
The statistical sample set was millions of games played.

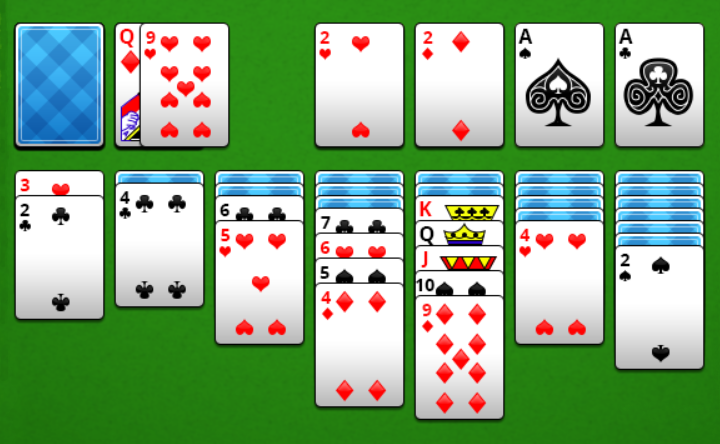

The results are about one win in 5.4 games played or 18.5% of games are won. My version has infinite number of go rounds on the play stack (no limit of three re-deals) and draw 3 cards. I put together a C program to try and figure out what the odds are. Ignoring those which do not produce a result quickly enough leads to the wide reported range for the probability. But even then, the consequences of different decisions in the game can sometimes have such far reaching and complicated consequences that not all initial permutations can be found to be solvable or not within a reasonable time. Doing this trims the decision tree and so shortens the time needed to investigate different possibilities. To deal with the excessive number of choices, you can apply heuristics which provide good methods for taking decisions without investigating every final result. To deal with the excessive number of permutations, one approach would be to take a random sample and to use statistical techniques to provide steadily narrowing confidence intervals around the estimates as the sample gets bigger. In practice neither of those two options are practical. So in theory it might be possible to look at all $52!\approx 8 \times 10^$ permutations of the cards and for each one (or for a eighth of them, taking account of the equivalence of suits) see whether it is possible to solve that case or not with any of the many combinations of choices by looking at every combination of choices. Klondike Solitare where the positions of all 52 cards are known. The numbers you quote are for "Thoughtful Solitaire", i.e. Inability to calculate the odds of winning a randomly dealt game as “ one of the embarrassments of applied mathematics” (Yan et al., 2005). 
Klondike Solitaire has become an almost ubiquitous computer application, available to hundreds of millions of users worldwide on all major operating systems, yet theoreticians have struggled with this game, referring to the I would like to end this question with a couple of lines from the paper (emphasis by me): There seems to be some programming going around, but what is the big idea behind their approach to the question? I tried reading the paper, but I'm too far away from those lines of thinking to understand what they're talking about. It came as a surprise to me that the answer is not really known, and that there are only estimates. The reference for the thresholds is this paper by Ronald Bjarnason, Prasad Tadepalli and Alan Fern. The number of unplayable games is 0.25% and the number of games that cannot be won is between 8.5-18%. In the same wikipedia link, it is stated thatįor a "standard" game of Klondike (of the form: Draw 3, Re-Deal Infinite, Win 52) the number of solvable games (assuming all cards are known) is between 82-91.5%. How does one even begin to find the number of solvable games?

I couldn't even begin to figure out how would one go solving this problem! Immediately my interest shifted from the answer to the above question, to the methods involved in answering it. I have no probability formation (save for an introductory undergraduate-level course), but anyway I started thinking on how could the problem be tackled.

When I came up with the question, it seemed a pretty reasonable thing to ask, and I thought "surely it must have been answered". What is the probability that a solitaire game be winnable? Or equivalently, what is the number of solvable games? By "solitaire", let us mean Klondike solitaire of the form "Draw 3 cards, Re-Deal infinite".
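To make the sampling idea above more concrete, here is a minimal Python sketch. It is not the code used in the paper or in the C program mentioned here. The function names `distinct_deals` and `wilson_interval` are hypothetical, the Wilson score interval is just one common choice of confidence interval, and the 18.5% win rate is borrowed from the text purely as an illustrative input. The first function shows the size of the space an exhaustive search would face; the second shows how the interval around an estimated win rate narrows as more random deals are played.

```python
import math


def distinct_deals():
    """Orderings of a 52-card deck, divided by the 8 suit symmetries
    (the "eighth of them" mentioned in the text)."""
    return math.factorial(52) // 8


def wilson_interval(wins, games, z=1.96):
    """Wilson score interval for a win probability.

    z = 1.96 corresponds to roughly 95% confidence (an assumed choice).
    Returns a (lower, upper) pair.
    """
    if games <= 0:
        raise ValueError("games must be positive")
    p_hat = wins / games
    denom = 1 + z * z / games
    centre = (p_hat + z * z / (2 * games)) / denom
    half = z * math.sqrt(
        p_hat * (1 - p_hat) / games + z * z / (4 * games * games)
    ) / denom
    return centre - half, centre + half


if __name__ == "__main__":
    print(f"deals to check exhaustively: about {distinct_deals():.2e}")
    # Illustrative only: pretend 18.5% of sampled games were won.
    for games in (1_000, 100_000, 10_000_000):
        wins = round(0.185 * games)
        low, high = wilson_interval(wins, games)
        print(f"{games:>10,} games: {low:.4f} to {high:.4f}")
```

The interval width shrinks roughly in proportion to one over the square root of the number of games, which is why a sample of millions of games gives a tight estimate of a program's win rate. That says nothing about the separate difficulty of deciding whether an individual deal is solvable at all.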
